In a recent article, I wrote about using Markov Chains and OpenAI's GPT-2 to generate text. After writing that article, I wondered: how can I use the GPT-2 models to train my own AI writer to mimic someone else's writing?

I didn't have to search for too long. Using these fascinating and helpful articles on creating poetry and writing JPop lyrics, I was able to quickly set the scripts up to create an AI writer.

With the help of this article, you will be able to create your own AI program to write articles. Of course, when I say AI, I am building upon the fantastic work of the OpenAI team and nshepperd, an anonymous programmer who made it very easy to re-train the OpenAI models. Let me also clarify that we aren't building a new deep learning model, but re-training the GPT-2 models on our chosen text.

For this article, I chose to work with Seth Godin's blog posts. I had found his books Linchpin: Are You Indispensable? and Permission Marketing very insightful. I unsubscribed from his newsletter after it became repetitive. A few of my friends still enjoy Seth's blog posts, so I thought I would try to mimic his writing -- and generate wisdom. I call my writer: godinator.

Let's get started then.

Things you need

- R and RStudio to get the articles from Seth's blog

- Google Cloud Compute to run and re-train the GPT-2 models

- This can get costly unless you qualify for the free tier.

- Access to a powerful computer (if you want to run the programs locally)

- Familiarity with SSH and shell commands

- Some Python knowledge

Get Data

Warning and disclaimer:

I don't condone stealing anyone's intellectual property. I provide the following scripts to teach you how to code and become proficient. I am not liable for your misuse of Seth's writing.

Seth's blog uses JavaScript to show archival content. I spent many hours trying to figure out the XPath or CSS selectors to get to the articles. I then decided to get the links from the blog's sitemap, which lists all the links on a site to help crawlers index it.

I took these steps to get the articles and save them in text files:

- Get all the links to articles on the blog

- Extract the article, date, and title from a blog post

- Save each article as a separate text file

Here's how:

Load our favorite libraries

library(stringr) # to manipulate strings

library(dplyr) # for easier data manipulation

library(readr) # to read and write files

library(rvest) # to get data from the web

Get the sitemap links

Since there are multiple sitemaps on the main sitemap page, I needed to extract all the links. Luckily, the sitemap XML files use "loc" as an identifier for the URLs.

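# sitemap_index_url is a placeholder: set it to the URL of the blog's sitemap index

sitemap_master_page <- read_html(sitemap_index_url)

sitemap_links <- sitemap_master_page %>%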

html_nodes(xpath = "//loc") %>%

html_text()

Then we need to get all the links from all the sitemaps. This function will take a sitemap file and store the links in a data frame.

get_blog_post_links <- function(sitemap_url) {

sitemap_html <- read_html(sitemap_url)

sitemap_links <- sitemap_html %>%

html_nodes(xpath = "//loc") %>%

html_text() %>%

as.data.frame(stringsAsFactors = FALSE) %>%

setNames(., "link")

sitemap_links %>%

# keep only links that contain a YYYY/MM date path, i.e. blog posts

filter(str_detect(string = link, pattern = "[0-9]{4}/[0-9]{2}"))

}

We will then run the get_blog_post_links function through all the sitemap links we found in the index sitemap.

all_article_links <- lapply(sitemap_links, get_blog_post_links) %>%

bind_rows()
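If you would rather follow along in Python, the link-collection steps can be sketched roughly like this. It is a minimal sketch, not the code I ran: the sitemap content and the example.com URLs are made up, and a real run would download each sitemap instead of using an inline string. The month is matched with `[0-9]{2}` so that all twelve months qualify.

```python
import re
import xml.etree.ElementTree as ET

# Hypothetical sitemap content; a real one would be downloaded from the blog.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/2019/03/first-post/</loc></url>
  <url><loc>https://example.com/2019/11/second-post/</loc></url>
  <url><loc>https://example.com/about/</loc></url>
</urlset>"""

# Assumption: post URLs carry a /YYYY/MM/ date path and other pages do not.
POST_PATTERN = re.compile(r"/[0-9]{4}/[0-9]{2}/")


def extract_locs(sitemap_xml):
    """Return the text of every <loc> element, whatever its XML namespace."""
    root = ET.fromstring(sitemap_xml)
    return [
        element.text.strip()
        for element in root.iter()
        if element.tag.rsplit("}", 1)[-1] == "loc" and element.text
    ]


def keep_post_links(links):
    """Keep only the links that look like dated blog posts."""
    return [link for link in links if POST_PATTERN.search(link)]


post_links = keep_post_links(extract_locs(SAMPLE_SITEMAP))
print(post_links)
```

The "about" page is dropped because it has no date in its path, which is the same filtering idea as in the R function.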

Get and save the articles

The blog post setup is very simple on this blog. The post body sits inside an element with the class has-content-area. We need to find that element and get the text inside it.

I wrote (ahem, copied from Django) a function to make sure the created files had proper and valid names.

# https://github.com/django/django/blob/master/django/utils/text.py

get_valid_filename <- function(str) {

str <- str_trim(string = str) %>%

str_replace_all(pattern = ' ', replacement = '-') %>%

str_replace_all(pattern = '(?u)[^-\\w.]', replacement = '') %>%

str_replace_all(pattern = "\\.", replacement = "")

return(str)

}
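To see what this kind of sanitizer does to a title, here is a small Python sketch of the same three steps (trim, turn spaces into hyphens, drop everything that is not a word character or a hyphen). The sample title is made up, and this is an illustration of the idea rather than a line-for-line port.

```python
import re


def get_valid_filename(title):
    """Turn a post title into a string that is safe to use as a file name."""
    name = title.strip().replace(" ", "-")
    # Drop anything that is not a letter, digit, underscore, hyphen, or dot.
    name = re.sub(r"[^-\w.]", "", name)
    # Dots go last so the only dot in the final path is the ".txt" extension.
    return name.replace(".", "")


print(get_valid_filename("  What's next? A plan.  "))  # prints Whats-next-A-plan
```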

Now to the article extractor.

This code is straightforward. For every URL, it finds the link inside the h2 tag and saves its text as the blog title, finds the paragraphs inside the has-content-area element and saves them as the body, and reads the element with the date class for the article date. Finally, it generates a valid file name and saves the article. For some reason, I was getting an unnecessary empty line at the end of each file, so I had to open the file again, remove the line, and save it again; the truncate command at the end trims the file's last byte. I also added a two-second delay so the blog's server would not see me as an attacker.

Then I let the program run overnight.

fetch_articles <- function(article_url) {

indiv_article_html <- read_html(article_url)

article_title <- indiv_article_html %>%

html_node(xpath = "//h2//a") %>%

html_text()

article_body <- indiv_article_html %>%

html_nodes(xpath = '//*[contains(concat( " ", @class, " " ), concat( " ", "has-content-area", " " ))]//p') %>%

html_text(trim = TRUE)

article_date <- indiv_article_html %>%

html_node(xpath = '//*[contains(concat( " ", @class, " " ), concat( " ", "date", " " ))]') %>%

html_text() %>%

as.Date(., format = "%B %d, %Y")

file_name <- paste0('/Users/ashutosh/Documents/godin_articles/',

get_valid_filename(article_title),

"_",

article_date,

".txt")

cat(

c(article_title, article_body),

file = file_name,

sep = "\n\n"

)

system(command = paste("truncate -s -1", file_name))

print(paste("Processed", article_url, "saved here", file_name))

Sys.sleep(2)

return(invisible())

}

lapply(all_article_links$link,

fetch_articles)

The next morning, I saw the program had terminated: either I was kicked off the server, or my computer went into sleep mode. Fortunately, the program had saved more than 2,700 of the 2,900+ articles on the blog. That was good enough for me.
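In hindsight, a crawl like this is easier to live with if it can be restarted without redoing work. Below is a minimal Python sketch of that idea, not what I actually ran: `fetch` stands in for whatever function downloads and parses one article, and the `article_00000.txt` naming scheme is made up for the example. It skips files that already exist and records failures instead of stopping.

```python
import time
from pathlib import Path


def crawl(urls, fetch, out_dir, delay_seconds=2.0):
    """Save each URL's text once, skip saved files, and keep going after errors."""
    out_dir = Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    failed = []
    for index, url in enumerate(urls):
        # Hypothetical naming scheme: one numbered file per URL.
        target = out_dir / f"article_{index:05d}.txt"
        if target.exists():
            continue  # already saved on an earlier run
        try:
            text = fetch(url)
        except Exception as error:
            failed.append((url, str(error)))  # note the failure and move on
            continue
        target.write_text(text, encoding="utf-8")
        time.sleep(delay_seconds)  # stay polite to the server
    return failed
```

Running the same call again after an interruption only fetches the articles that are still missing, and the returned list tells you which URLs to retry.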

Moving on to the cloud

There was no way my old computer would have handled the resource demands of these huge deep learning models. For the next part, I used Google's Cloud Compute services. Check out this wonderful article on getting started on Google Cloud.

Here are some general steps to run a virtual machine instance on Google Cloud, which, as an aside, I found easier to use than Amazon's cloud services.

Create a VM Instance

Once you have created your Google Cloud account and logged in to the Google Compute Engine area, click on Create Instance.

Get the Deep Learning VM deployment from the marketplace. This VM comes with all the Python and TensorFlow packages needed to train deep learning models.

Fill in the details in the next window and select "us-west1-b" as the zone. GPUs aren't available in every zone. You will have to request a quota increase because, by default, no one gets a GPU. You should get that GPU in less than 24 hours.

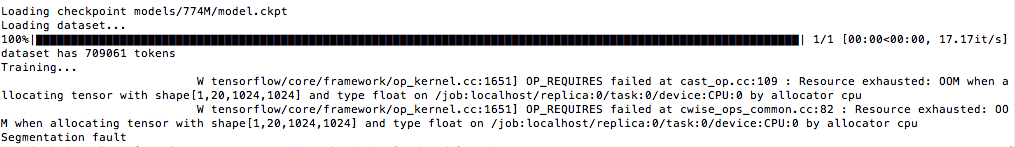

Initially, I had selected n1-highmem-4 with 4vCPU and 26GB memory, but I got out of memory errors with that machine, so I changed the machine to n1-highmem-8 with 8vCPU and 52GB memory -- without losing my data! The wonders of modern technology!

Once you hit create (or launch), you should see that Google will launch a new instance for you.

Get Google Cloud SDK

You will have an easier time if you have the Google Cloud SDK installed on your local machine. It makes connecting to the cloud VM easy as well as transferring files back and forth.

curl https://sdk.cloud.google.com | bash

exec -l $SHELL

gcloud init

gcloud auth login

You will then sign in with your Google account and create SSH keys to connect to your VM and project.

Get the SSH connection command

You will see a small dropdown under SSH in your list of instances. Click on it to get the gcloud command.

The gcloud command will look something like:

gcloud beta compute --project ssh --zone "us-west1-b"

Once you are authenticated to the VM from your local machine, you are ready to move on to the next steps of re-training the GPT-2 models on the VM.

Get the scripts supporting GPT-2

Now, while connected to your VM via SSH, you will run some commands to get the scripts and the models.

Get nshepperd's scripts and functions

wget https://github.com/nshepperd/gpt-2/archive/finetuning.zip

Unzip the code files

unzip finetuning.zip

Rename the extracted folder

mv gpt-2-finetuning gpt-2

Add the local user path to the PATH environment variable

PATH=/home//.local/bin:$PATH

Download all the requirements needed for GPT-2.

pip3 install --user -r gpt-2/requirements.txt

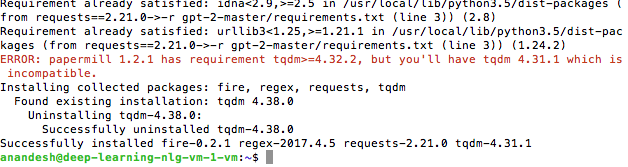

You may get an error about a library or two. Read those carefully and reinstall if required. In my case, I had to update the tqdm library.

pip3 install --upgrade --user tqdm

I also got an error later on regarding the toposort library. I installed it with the --user flag.

pip3 install --user toposort

Get the GPT-2 models

Change to the gpt-2 directory and download the GPT-2 models.

cd gpt-2/

I was very optimistic and very excited about the largest GPT-2 model OpenAI had released recently. This model has 1.5 billion parameters and can generate very humanlike text.

More than a billion parameters!

I will save you some time: I couldn't fit this model in my 52GB of memory. I thought I would try the next one, the 774M one. Nope. Fail.

Finally, I had success with the 355M model.

Download the 355M model with this command:

python3 download_model.py 355M

Encode and train the 355M model

The first step before we re-train the GPT-2 355M model is to encode the articles. We do so with this command:

PYTHONPATH=src ./encode.py --model_name='355M' src/articles src/articles_encoded355.npz

The next step is to re-train the 355M model with Godin's articles. Since this process will go on for a long time, I advise you to activate a screen, so you can quit out of SSH and log in again whenever you want to check the progress. I did not do this in the beginning and my computer would sleep and kill the SSH session.

You start a screen with this command:

screen -S godinator

Now, you will be on a separate screen (session), which you can detach and resume.

We are now ready to start the re-training.

PYTHONPATH=src ./train.py --dataset src/articles_encoded355.npz --model_name='355M'

When you start the training, you will see some alarming messages about TensorFlow's functions, but thankfully, they are warnings and not errors.

In 30 seconds or so, you will see the first training step complete. You will keep seeing updates after every step, but the model won't be saved until 1,000 steps. You will be able to read the samples after every 100 steps, though, so it's awesome to see how the text progresses.

Now, you can detach the session by hitting Control + A and pressing d. You will see a message that you are detached from the screen.

You can check all the screens with the screen -ls command.

To go back to the screen, you have to copy the exact screen name, which will be in this format: digits.godinator

In my case, it was 20371.godinator, so to resume, I used this command:

screen -r 20371.godinator

Once the model has been training for at least 100 steps, you should see a sample generated in the samples/run1 directory. You don't need to resume your screen session to read these samples. Just connect to the VM using the SSH command and navigate to the directory. I used vim to read the samples like this:

vi samples/run1/samples-100

To get out of vim, you have to enter ":q!"

This was my first sample at 100 steps:

First Sample

"You know you're going too bad,"

Right away, you can see that GPT-2 is creating mostly coherent sentences. It got the opening quote right, as many of Godin's articles start with a quote. It also got the very short sentence-paragraph right: an inspirational punchline. "It starts with you."

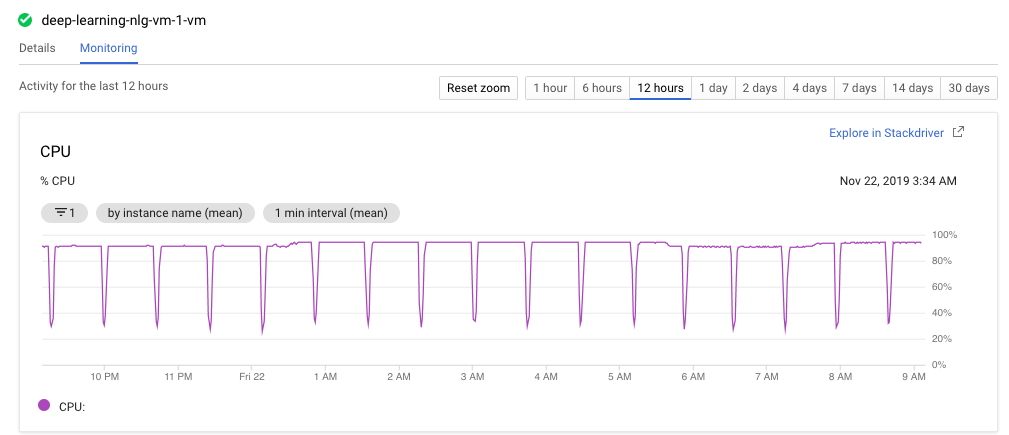

I let the program run for a few days. As you can see from the CPU chart below, the scripts used almost 100% of the CPU for most of the time. The dips you see are when GPT-2 is generating a new sample. I had set an alert on Google to let me know when the bill got closer to and over $100. I spent about $60 on this training. 🙁

Here's a sample after 5,000 steps:

5,000 Steps Sample

The three riffs

I feel like there are some things that make sense and could even be profound like this paragraph: "Something bigger because you're giving people a new lease of life, giving them permission to do things they thought were off limits, to take initiative, to make connections, to fly without a permit, to make a difference." But there are quite a few misses.

At 6,000 steps:

6,000 Steps Sample

Is everyone an artist?

LOL. I love the snide comments: "people are just average people". I think the program is understanding Godin's way of explaining things, but this text feels off.

I stopped the program after 8,100 steps. I downloaded the trained models to my local computer so that I could generate more samples without incurring more charges from Google.

gcloud beta compute scp --recurse --zone=us-west1-b :~/gpt-2/checkpoint/run1 ~/Documents/godinator-learnedmodels

After downloading these trained models from the Google cloud VM, I stopped the instance.

I also repeated the steps from the beginning to download the GPT-2 355M model as well as nshepperd's scripts.

Then I created a new folder in my /gpt-2/models folder called godinator.

I moved all the files from the godinator-learnedmodels directory to /gpt-2/models/godinator

I also copied the following files from /gpt-2/models/355M to /gpt-2/models/godinator

- encoder.json

- hparams.json

- vocab.bpe
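The folder shuffling above can also be scripted. This is a minimal Python sketch under a few assumptions: the function name and the three directory arguments are mine, and it copies files rather than moving them so the downloaded checkpoint stays untouched.

```python
import shutil
from pathlib import Path

# The three files the fine-tuned model borrows from the base 355M model.
SHARED_FILES = ("encoder.json", "hparams.json", "vocab.bpe")


def assemble_model_dir(checkpoint_dir, base_model_dir, target_dir):
    """Collect checkpoint files and the shared tokenizer/config files in one folder."""
    checkpoint_dir = Path(checkpoint_dir)
    base_model_dir = Path(base_model_dir)
    target_dir = Path(target_dir)
    target_dir.mkdir(parents=True, exist_ok=True)
    # Copy every file from the downloaded checkpoint folder.
    for item in checkpoint_dir.iterdir():
        if item.is_file():
            shutil.copy2(item, target_dir / item.name)
    # Add the files that are not part of the checkpoint itself.
    for name in SHARED_FILES:
        shutil.copy2(base_model_dir / name, target_dir / name)
    return sorted(path.name for path in target_dir.iterdir())
```

You would call it with the godinator-learnedmodels folder, the models/355M folder, and the new models/godinator folder, in that order.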

Now, I was ready to run a local natural language generator, a.k.a. my AI writer.

There are two ways of letting the AI write articles: 1) unconditional writing, meaning the AI program will keep writing stories until we stop it (by Control + C) or specify the number of samples, and 2) interactive prompt, in which we feed some information to the program to generate new text based on this entered text.

I generated unconditional samples with this command:

python3 ./src/generate_unconditional_samples.py --temperature 0.8 --top_k 40 --nsamples 5 --model_name godinator > gen_samples.txt

It created five samples and saved them to the gen_samples.txt text file. Although you may hear the computer heating up, the program finishes successfully and generates new stories.
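If you are curious what the temperature and top_k settings do, here is a minimal, standard-library Python sketch of top-k sampling with temperature. It illustrates the general technique on a toy list of scores; it is not the code from the GPT-2 scripts, and the function name and the toy values are my own.

```python
import math
import random


def sample_top_k(logits, temperature=0.8, top_k=40, rng=random):
    """Pick one token index from the top_k highest scores after temperature scaling."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    # Rank token indices from most to least likely and keep the best top_k.
    ranked = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)
    kept = ranked[:top_k] if top_k > 0 else ranked  # here 0 means "no limit"
    # Lower temperatures sharpen the distribution, higher ones flatten it.
    scaled = [logits[i] / temperature for i in kept]
    peak = max(scaled)
    # Softmax weights, shifted by the maximum for numerical stability.
    weights = [math.exp(value - peak) for value in scaled]
    return rng.choices(kept, weights=weights, k=1)[0]


toy_logits = [0.1, 2.0, -1.0, 0.5]
print(sample_top_k(toy_logits, temperature=0.8, top_k=2))
```

With top_k set to 1 the function always returns the single most likely token, which is why small values of top_k make the text safer but more repetitive.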

The way the deep layers of the neural network can generate new information and write (mostly) coherent text surprises and impresses me. In one of the samples, I read that the program created a fake YouTube star complete with a pseudonym and a real name. It gave a back story to how this person became a star! I had to search for his name to make sure it wasn't mentioned in the training data. Google found nothing! Nothing!

Here are some other samples I found intriguing.

The two sides

Agenda

Dreams

Conclusion

- Training and re-training are resource intensive. You need to spend a good amount of resources to be able to train even the "smaller" model with 355M parameters.

- This smaller model generates impressive, surprising, and sometimes profound text. But none of the essays I read are publication-ready. A good editor, however, can turn these articles into gold. Perhaps, the larger model is as scary as OpenAI initially claimed.

- The best part: everyone, even beginners, can take part in re-training and fine-tuning these powerful natural language generators.

What do you think? What uses of this technology do you see?

oh that’s a great article is it made by AI (“just joking”) great!

Bhai, I just tried to make an NLP model where I have to predict base_score on the basis of patient reviews, but when I looked at the counts of base_score, there were about 1300 for 32000 patients. Every base_score is a float value like 8.2569856555; some base_scores appear about 250 times, but many (about 8000) appear fewer than 50 times. This doesn't seem like a typical NLP problem where I have to predict only 10 different values. Please help if you can; I tried a lot but didn't do great.

Great article! I was wondering what the size and specs of your local computer were to run the GPT2?

My initial thought was to try the 774M model on a larger instance, collect the learned models, and incorporate them into my local 355M machine. Would that be a viable option in your opinion?

Yes, Erik, that’s similar to what I did. I trained the models on Google cloud and brought down the trained models to my local computer. I don’t remember, but I think I had difficulties training the 774 model.